If you deploy models to production, sooner or later you will start looking at ML model monitoring tools. Once your ML models affect the business, which they should, you need insight into how they are actually behaving.

Usually, the first time you notice a problem is when something stops working. Without a model monitoring setup, you might not know what is wrong or where to start looking for issues and fixes. And people will want you to fix it right away.

One advantage of working for an MLOps firm is speaking with many ML teams and learning first-hand what they monitor:

- Watch how well your model performs in practice and how accurately it makes predictions.

- Check to see if the model’s performance deteriorates with time.

- Keep an eye on the distribution and characteristics of the model’s input and output data to determine whether they have changed.

- Has the distribution of predicted classes changed over time? Such shifts may be related to data drift and concept drift.
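One way to check the last item, whether the distribution of predicted classes has shifted, is to compare a reference window with a recent window. The sketch below uses the Population Stability Index (PSI), which is only one of several possible drift scores. The class names, the sample predictions, and the 0.2 threshold are assumptions made for illustration, not values from any particular tool.

```python
import math
from collections import Counter


def class_distribution(labels, classes):
    """Return the share of each class in `labels`, in the order given by `classes`."""
    counts = Counter(labels)
    total = len(labels)
    return [counts[c] / total for c in classes]


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two discrete distributions given as lists of proportions.

    `eps` avoids log(0) when a class is missing from one of the windows.
    """
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


classes = ["approve", "review", "reject"]
# Hypothetical predictions: a reference window and a recent production window.
baseline_preds = ["approve"] * 70 + ["review"] * 20 + ["reject"] * 10
recent_preds = ["approve"] * 40 + ["review"] * 25 + ["reject"] * 35

baseline = class_distribution(baseline_preds, classes)
recent = class_distribution(recent_preds, classes)
psi = population_stability_index(baseline, recent)

# 0.2 is a commonly quoted rule-of-thumb threshold, not a universal constant.
drift_suspected = psi > 0.2
print(f"PSI = {psi:.3f}, drift suspected: {drift_suspected}")
```

A score like this only says that the prediction mix has moved. Deciding whether the cause is data drift, concept drift, or a legitimate change in traffic still requires looking at the inputs and, where available, the true labels.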

Monitoring ML model training and retraining involves looking at learning curves, the trained model’s prediction distributions, or confusion matrices.
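As a small illustration of one of these artifacts, here is a sketch that builds a confusion matrix from scratch so it can be logged after each training or retraining run. The labels and predictions are made up for the example, and most teams would likely use a library routine instead of hand-rolled code.

```python
from collections import Counter


def confusion_matrix(y_true, y_pred, labels):
    """Rows are true labels, columns are predicted labels."""
    pair_counts = Counter(zip(y_true, y_pred))
    return [[pair_counts[(t, p)] for p in labels] for t in labels]


labels = ["cat", "dog"]
# Hypothetical validation labels and predictions from one training run.
y_true = ["cat", "cat", "cat", "dog", "dog", "dog"]
y_pred = ["cat", "cat", "dog", "dog", "dog", "cat"]

matrix = confusion_matrix(y_true, y_pred, labels)
# Correct predictions sit on the diagonal of the matrix.
accuracy = sum(matrix[i][i] for i in range(len(labels))) / len(y_true)

for label, row in zip(labels, matrix):
    print(label, row)
print("accuracy:", accuracy)
```

Comparing this matrix between the previous and the retrained model shows which classes improved or regressed, something a single accuracy number hides.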

- Keep track of model testing and evaluation: record metrics, charts, predictions, and other information for your automated testing or evaluation pipelines.

- Check the hardware metrics to see how much CPU, GPU, or memory your models use during training and inference.

- Monitor CI/CD pipelines for ML: visually compare the evaluation results from your CI/CD pipeline jobs.
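For the hardware metrics item in the list above, a rough first measurement is possible with the Python standard library alone. The sketch below times a call and records its peak Python memory. `fake_predict` is a hypothetical stand-in for a real inference call, and `tracemalloc` only sees memory allocated by Python itself, so GPU memory and native library buffers are not covered.

```python
import time
import tracemalloc


def measure(fn, *args):
    """Run fn(*args) and return (result, wall_seconds, cpu_seconds, peak_bytes)."""
    tracemalloc.start()
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    try:
        result = fn(*args)
    finally:
        wall = time.perf_counter() - wall_start
        cpu = time.process_time() - cpu_start
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return result, wall, cpu, peak


def fake_predict(batch):
    """Hypothetical stand-in for a model's inference call."""
    return [sum(x * x for x in row) for row in batch]


batch = [[float(i + j) for j in range(64)] for i in range(256)]
predictions, wall, cpu, peak = measure(fake_predict, batch)
print(f"wall={wall:.4f}s cpu={cpu:.4f}s peak_python_memory={peak / 1024:.1f} KiB")
```

Numbers like these are most useful when logged per run or per request and compared over time, which is the part that dedicated monitoring tools take care of.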

In ML, metrics often tell only part of the story; someone has to look at the actual results.

- Arize

Arize is an ML model monitoring tool that can improve your project’s observability and help you debug production AI.

If the ML team works without a robust observability and real-time analytics platform, engineers may spend days trying to track down possible issues. Arize AI, which has attracted $19 million in funding, makes it simple to identify what went wrong, so engineers can find and fix issues right away.

- Neptune

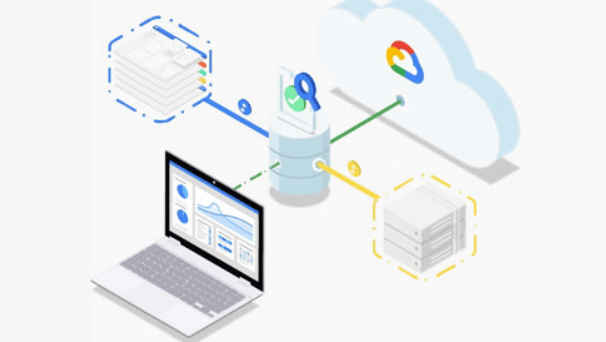

Neptune is a metadata store for MLOps, built for research and production teams that run many experiments.

Almost any ML metadata, including metrics and losses, prediction images, hardware metrics, and interactive visualizations, can be logged and displayed.

- Prometheus

Prometheus is a well-known open-source monitoring tool, originally created at SoundCloud, for collecting multi-dimensional data and running queries against it. It is a general-purpose system that is often used for ML model monitoring.

Prometheus has a number of exporters and client libraries and is tightly integrated with Kubernetes. It also has a fast query language, PromQL. Prometheus is also compatible with Docker and available through Docker Hub.
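To give a feel for what Prometheus collects, the sketch below renders a prediction counter in the Prometheus text exposition format, the plain-text payload a server scrapes from a metrics endpoint. The metric name `model_predictions_total`, the `predicted_class` label, and the sample counts are hypothetical. A real service would normally use an official client library rather than building this text by hand.

```python
from collections import Counter


def escape_label_value(value):
    """Escape backslash, double quote, and newline as the text format requires."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_counter(name, help_text, label_name, counts):
    """Render one counter family in the Prometheus text exposition format."""
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} counter"]
    for label_value, count in sorted(counts.items()):
        escaped = escape_label_value(label_value)
        lines.append(f'{name}{{{label_name}="{escaped}"}} {count}')
    return "\n".join(lines) + "\n"


# Hypothetical predictions served by a model since startup.
served = Counter(["cat", "dog", "cat", "cat"])
payload = render_counter(
    "model_predictions_total",
    "Predictions served, by predicted class.",
    "predicted_class",
    served,
)
print(payload, end="")
```

With a counter like this being scraped, a query such as `sum by (predicted_class) (rate(model_predictions_total[5m]))` would chart the per-class prediction rate, which is one way to watch the predicted-class mix over time.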

- WhyLabs

WhyLabs is a model observability and monitoring tool that helps ML teams keep track of data pipelines and ML applications. Tracking a model’s performance once it is deployed is essential for catching degradation proactively, and it lets you decide when and how often to retrain and update the model.

WhyLabs helps detect data bias, data drift, and data quality degradation. Because it is easy to use in mixed teams where experienced developers work alongside more junior staff, it has quickly become popular among developers.

- Censius

Censius is an AI model observability platform that lets you monitor the whole ML pipeline, explain predictions, and proactively address problems for better business outcomes.

Now that you know how to evaluate ML model monitoring tools and what is available, the best next step is to try out the one you like most!