Data integrity checks sustain trust across the data lifecycle by using unique identifiers to trace provenance, validate consistency, and detect anomalies. The identifiers discussed here, Itoirnit and its peers, serve as conceptual anchors for automated validations, baseline signatures, and periodic verifications. A disciplined approach pairs these mechanisms with governance, independent validation, and continuous improvement so that the checks remain transparent. The framework invites scrutiny of its implementation details, risk controls, and operational metrics, and of how these identifiers enable resilient data ecosystems.

What Is a Data Integrity Check and Why It Matters

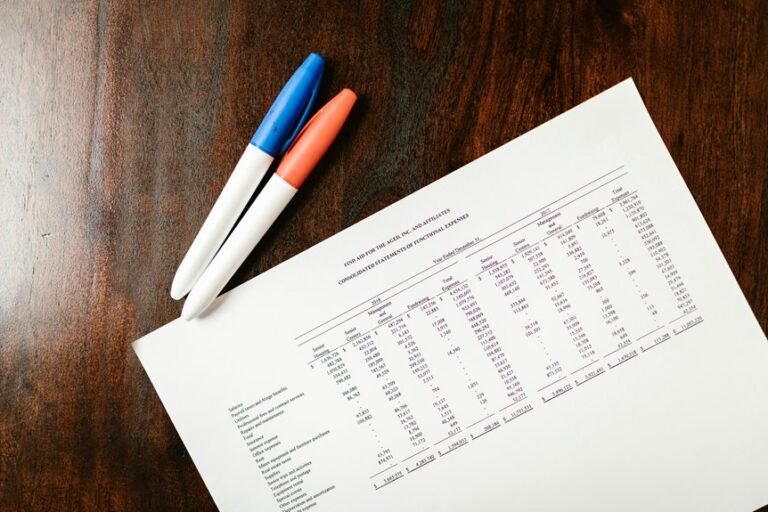

A data integrity check is a systematic process that verifies whether data remains accurate, complete, and consistent over its lifecycle.

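It matters because reports, decisions, and downstream systems inherit whatever errors the data carries. Validation therefore acts as a guardrail against accidental corruption, processing errors, and unauthorized modifications.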
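A minimal sketch of the core idea in Python, assuming a SHA-256 digest as the recorded fingerprint (the document does not prescribe a hash function): a digest is computed when the data is stored and recomputed later, and any mismatch signals that the bytes changed. The function names `sha256_digest` and `is_intact` are illustrative, not part of any established API.

```python
import hashlib


def sha256_digest(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def is_intact(data: bytes, expected_digest: str) -> bool:
    """True if the data still hashes to the digest recorded earlier."""
    return sha256_digest(data) == expected_digest


original = b"order_id=1001,amount=250.00"
recorded = sha256_digest(original)  # stored alongside the data at write time

print(is_intact(original, recorded))                        # True
print(is_intact(b"order_id=1001,amount=2500.0", recorded))  # False
```

A plain hash like this detects accidental corruption. Detecting deliberate tampering also requires protecting the recorded digest itself, for example with a keyed MAC, since anyone who can rewrite both the data and its digest defeats the check.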

How Identifiers Like Itoirnit and Friends Fit Into Integrity Workflows

Identifiers like Itoirnit and its counterparts act as stable reference points within data integrity workflows. Because each identifier consistently names one element and records how that element relates to others, any record can be traced back through the steps that produced it. This enables precise data lineage mapping and robust audit trails, which support accountability and anomaly detection. Well-managed identifiers also keep metadata consistent and unambiguous, sustaining confidence across processes, governance, and compliance without adding unnecessary complexity or redundancy.
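To make the lineage idea concrete, here is a hedged sketch of an append-only audit trail keyed by identifier. The class names, step labels, and every identifier other than Itoirnit (`peer-B`, `report-42`, `summary-7`) are hypothetical; the point is only that consistent identifiers let you walk a record's history upstream.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageEvent:
    """One step in a record's history, keyed by the record's identifier."""
    record_id: str
    step: str
    source_ids: tuple[str, ...]
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditTrail:
    """Append-only log of lineage events (hypothetical example class)."""

    def __init__(self) -> None:
        self._events: list[LineageEvent] = []

    def record(self, record_id: str, step: str, *source_ids: str) -> None:
        # Events are only ever appended, never edited in place.
        self._events.append(LineageEvent(record_id, step, tuple(source_ids)))

    def history(self, record_id: str) -> list[LineageEvent]:
        return [e for e in self._events if e.record_id == record_id]

    def upstream(self, record_id: str) -> set[str]:
        """All identifiers record_id was derived from, transitively."""
        seen: set[str] = set()
        stack = [record_id]
        while stack:
            current = stack.pop()
            for event in self.history(current):
                for src in event.source_ids:
                    if src not in seen:  # also guards against cycles
                        seen.add(src)
                        stack.append(src)
        return seen


trail = AuditTrail()
trail.record("Itoirnit", "ingest")
trail.record("peer-B", "ingest")
trail.record("report-42", "join", "Itoirnit", "peer-B")
trail.record("summary-7", "aggregate", "report-42")
print(sorted(trail.upstream("summary-7")))  # ['Itoirnit', 'peer-B', 'report-42']
```

Scanning a list keeps the sketch short; a production system would more likely persist the log and index it by identifier.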

Step-by-Step Approach to Implementing a Robust Integrity Check

How can an organization establish a robust integrity check in a practical, repeatable way? A disciplined framework applies data validation at the input, transformation, and storage stages and pairs it with periodic checksum verification to detect drift. In practice, that means establishing baseline signatures for known-good data, automating verifications against those baselines, auditing the anomalies they surface, and enforcing remediation. Documentation and governance keep the process repeatable, transparent, and open to continual improvement without sacrificing flexibility or organizational agility.
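The steps above might be sketched as follows, assuming records are JSON-like dictionaries with an `id` field. The validation rules, the choice of SHA-256 over canonical JSON as the baseline signature, and all function names are assumptions for illustration rather than a prescribed implementation.

```python
import hashlib
import json


def validate(record: dict) -> list[str]:
    """Input-stage validation; returns problems (empty list means valid).

    The required fields and rules are illustrative assumptions.
    """
    problems = []
    if not isinstance(record.get("id"), str) or not record.get("id"):
        problems.append("missing or empty id")
    amount = record.get("amount")
    if not isinstance(amount, (int, float)) or amount < 0:
        problems.append("amount must be a non-negative number")
    return problems


def signature(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_baseline(records: list[dict]) -> dict[str, str]:
    """Map each record id to its signature at a known-good point in time."""
    return {r["id"]: signature(r) for r in records}


def verify(records: list[dict], baseline: dict[str, str]) -> dict[str, list[str]]:
    """Compare current records with the baseline and classify anomalies."""
    current = {r["id"]: signature(r) for r in records}
    return {
        "modified": sorted(i for i in current if i in baseline and current[i] != baseline[i]),
        "added": sorted(i for i in current if i not in baseline),
        "missing": sorted(i for i in baseline if i not in current),
    }


records = [{"id": "Itoirnit", "amount": 10}, {"id": "peer-B", "amount": 5}]
valid = [r for r in records if not validate(r)]  # reject bad input before baselining
baseline = build_baseline(valid)

# Later: one record has drifted and a new one has appeared.
later = [
    {"id": "Itoirnit", "amount": 11},
    {"id": "peer-B", "amount": 5},
    {"id": "peer-C", "amount": 1},
]
report = verify(later, baseline)
print(report)  # {'modified': ['Itoirnit'], 'added': ['peer-C'], 'missing': []}
```

Running `verify` on a schedule turns the stored baseline into drift detection; each non-empty bucket in the report is an anomaly to audit and remediate.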

Common Pitfalls and Best Practices to Sustain Data Trust

Even with rigorous planning, data trust remains fragile when common pitfalls go unchecked and best practices are applied unevenly. Organizations should codify data governance policies, ensure that validation is performed independently, and provide ongoing education to sustain integrity. Clear data lineage exposes provenance, reinforces accountability, and reduces ambiguity. Regular audits, version control, and stakeholder alignment minimize drift, strengthen confidence, and support resilient data ecosystems.
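One common pitfall is computing signatures over a serialization that is not canonical. Two systems holding the same logical record then produce different digests and raise false drift alerts, which erodes confidence in the checks. The sketch below uses Python's standard `json` and `hashlib` modules (the function names are illustrative) to contrast a naive digest with one computed over sorted keys and fixed separators.

```python
import hashlib
import json


def naive_digest(record: dict) -> str:
    # Pitfall: json.dumps keeps insertion order, so key order leaks into the hash.
    return hashlib.sha256(json.dumps(record).encode("utf-8")).hexdigest()


def canonical_digest(record: dict) -> str:
    # Sorted keys and fixed separators give one encoding per logical record.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# The same logical record, emitted by two systems with different key order.
from_system_a = {"id": "Itoirnit", "amount": 10}
from_system_b = {"amount": 10, "id": "Itoirnit"}

print(naive_digest(from_system_a) == naive_digest(from_system_b))          # False (false alarm)
print(canonical_digest(from_system_a) == canonical_digest(from_system_b))  # True
```

Sorting keys addresses only key order. Number formatting and Unicode representation can also differ between systems, which is the kind of problem schemes such as the JSON Canonicalization Scheme (RFC 8785) aim to address.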

Conclusion

In the ledger of data, names are more than labels; they are the quiet tally marks of trust. Like distant constellations guiding sailors, these identifiers anchor audits, trace lineage, and expose anomalies without fanfare. As systems evolve, the discipline stays the same: automate, baseline, verify. The framework endures not through novelty but through steady, informed vigilance, a reminder to practitioners that integrity, once established, becomes the standard by which every signal is judged.